Abstraction is the invisible idea that makes modern computing possible. From smartphones to software, abstraction allows humans to manage overwhelming complexity by hiding unnecessary details. This article explores why abstraction is not just a programming concept, but the foundation of computer science itself.

If you've ever driven a car without knowing how a combustion engine works, or sent a text message without understanding how radio waves carry data, you've experienced abstraction. This invisible architecture shapes modern life and is especially central to computer science.

Abstraction may be the most important concept you've never heard of.

What Is Abstraction?

At its core, abstraction is the art of hiding complexity. It's about creating simplified interfaces that allow us to interact with complex systems without needing to understand every detail of how they work.

Consider a light switch. When you flip it, you don't think about the electricity flowing through wires, the power grid distributing electricity from a plant miles away, or the physics behind how a filament glows when heated. You simply think: "I want light," and you flip the switch. The switch acts as an abstraction—a simple interface that hides a complex system.

Abstraction is not about oversimplifying or throwing information away. It is about managing complexity by dividing a system into separate layers. Each layer handles specific concerns and presents a clean interface to the layer above it. This way, we can build systems far too complex for any one person to understand in full, because no one has to.
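
The light switch and the idea of layers can be sketched in code. The Python below is a toy model, not a description of real electrical systems: the class names (`PowerGrid`, `Wiring`, `Lamp`, `LightSwitch`) and the 230-volt figure are hypothetical, chosen only for illustration. Each class talks only to the layer directly beneath it, and the caller sees a single `flip()` method.

```python
class PowerGrid:
    """Bottom layer (hypothetical): supplies a voltage."""

    def supply(self) -> float:
        return 230.0  # illustrative value only


class Wiring:
    """Middle layer: connects the grid to the lamp when the circuit is closed."""

    def __init__(self, grid: PowerGrid) -> None:
        self._grid = grid
        self._closed = False

    def set_closed(self, closed: bool) -> None:
        self._closed = closed

    def voltage_at_lamp(self) -> float:
        return self._grid.supply() if self._closed else 0.0


class Lamp:
    """Knows only about the wiring, not about the grid behind it."""

    def __init__(self, wiring: Wiring) -> None:
        self._wiring = wiring

    def is_lit(self) -> bool:
        return self._wiring.voltage_at_lamp() > 0.0


class LightSwitch:
    """Top layer: the only interface the user touches."""

    def __init__(self) -> None:
        self._wiring = Wiring(PowerGrid())
        self._lamp = Lamp(self._wiring)
        self._on = False

    def flip(self) -> bool:
        """Toggle the switch and report whether the light is on."""
        self._on = not self._on
        self._wiring.set_closed(self._on)
        return self._lamp.is_lit()


switch = LightSwitch()
print(switch.flip())  # True
print(switch.flip())  # False
```

Replacing `PowerGrid` with, say, a solar-panel class that offers the same `supply()` method would require no change to `LightSwitch` or to the code that calls it.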

The Layers of Abstraction in Computing

Computer systems are built on a remarkable tower of abstractions, each one resting on the layer below it. At the base, there's raw physics: electrons moving through silicon, magnetic fields storing data, and light pulses traveling through fiber-optic cables. These are the concerns of electrical engineers and physicists.

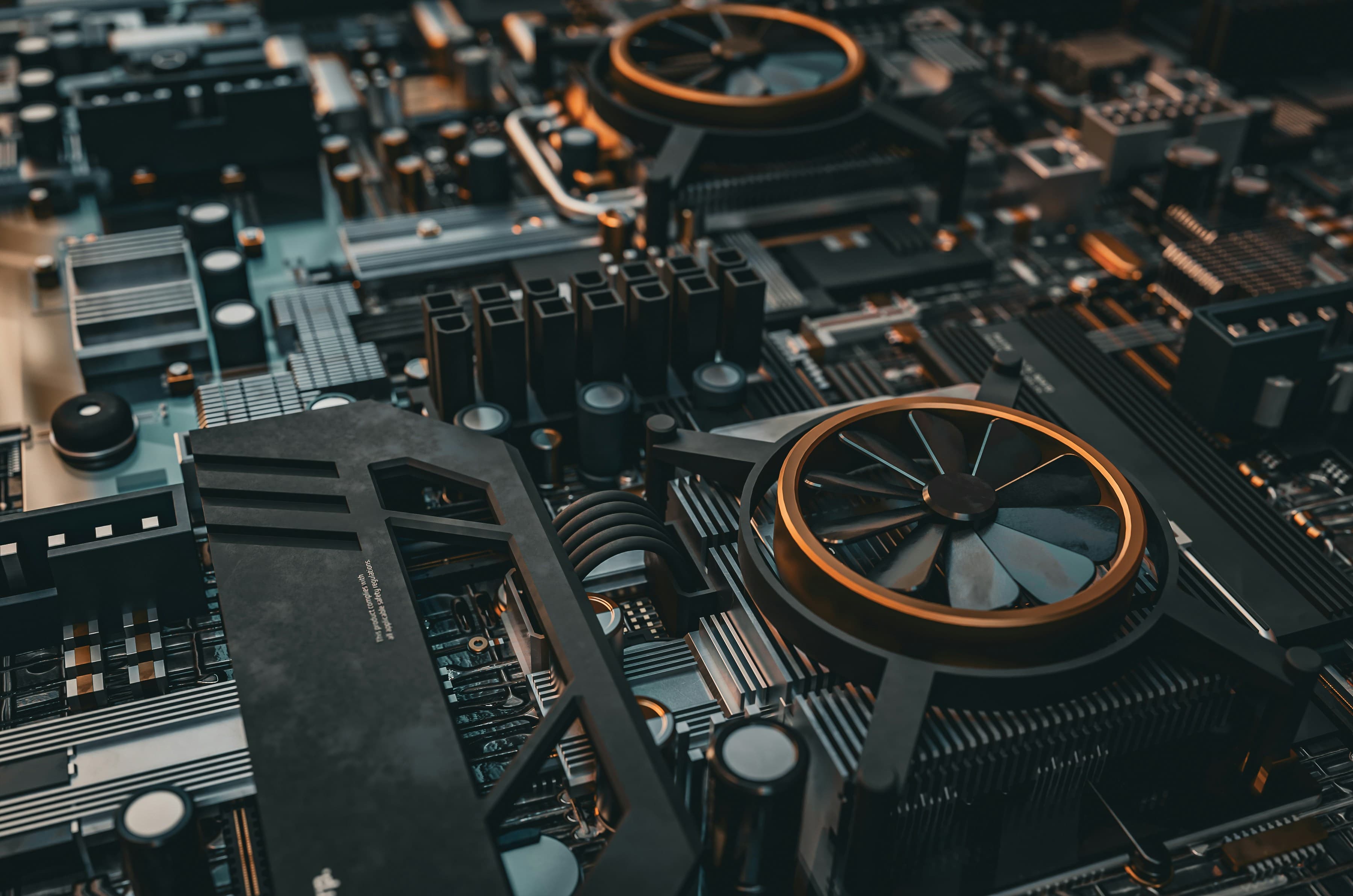

Above that sits the hardware layer: processors, memory chips, storage devices. These components are themselves abstractions. Transistors hide the quantum-mechanical behavior of semiconductors behind a simple on/off switch, and logic gates built from transistors hide those switches behind operations like AND, OR, and NOT.
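
To make stacked hardware abstractions concrete, the sketch below models one primitive gate, NAND, as a Python function and then builds every other gate, and finally an adder, only out of that primitive. This is a simulation under simplifying assumptions (gates as pure functions, no timing or electrical behavior), not how chips are actually designed, and the function names are hypothetical. It relies on the fact that NAND alone is enough to express any logic function.

```python
def nand(a: int, b: int) -> int:
    """Primitive gate: stands in for a small transistor circuit."""
    return 0 if (a and b) else 1


# Every other gate is defined only in terms of nand().
def not_(a: int) -> int:
    return nand(a, a)


def and_(a: int, b: int) -> int:
    return not_(nand(a, b))


def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))


def xor(a: int, b: int) -> int:
    return and_(or_(a, b), nand(a, b))


def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three bits; return (sum_bit, carry_out)."""
    partial = xor(a, b)
    sum_bit = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return sum_bit, carry_out


def add(x: int, y: int, width: int = 8) -> int:
    """Ripple-carry addition of two unsigned integers, one bit at a time."""
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result  # the final carry is dropped, so results wrap modulo 2**width


print(add(5, 3))      # 8
print(add(200, 100))  # 44, because 300 does not fit in 8 bits
```

Code that calls `add` never mentions NAND at all; each layer uses only the one below it.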

Next comes the operating system, perhaps the most impressive example of abstraction in computing. Systems like Windows, macOS, and Linux each present a unified view of your computer's resources—memory, storage, processing power—even though underneath they manage billions of operations across countless hardware components. When you save a file, you don't specify which sectors on your hard drive to use. You simply click "Save," and the operating system handles the rest.
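
In code, the same abstraction shows up as the file API. The Python sketch below writes and reads a small text file: the program supplies a path and some text, and the operating system decides where on the storage device the bytes actually go. The helper names `save_note` and `load_note` are hypothetical, and the file lives in a temporary directory so the example cleans up after itself.

```python
import os
import tempfile


def save_note(path: str, text: str) -> None:
    # We name a path; the OS chooses which disk blocks hold the data.
    with open(path, "w", encoding="utf-8") as f:
        f.write(text)
        f.flush()             # move Python's buffered data into the OS
        os.fsync(f.fileno())  # ask the OS to commit the data to the device


def load_note(path: str) -> str:
    with open(path, encoding="utf-8") as f:
        return f.read()


with tempfile.TemporaryDirectory() as folder:
    note_path = os.path.join(folder, "note.txt")
    save_note(note_path, "Hello, abstraction")
    print(load_note(note_path))        # Hello, abstraction
    print(os.stat(note_path).st_size)  # 18 (reported in bytes, not sectors)
```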

Programming languages add another layer. When a developer writes code in Python or JavaScript, they're working with abstractions like variables and functions instead of manually managing memory addresses or writing machine code in binary (1s and 0s). A compiler or interpreter handles the translation down to instructions the hardware can execute.
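
Python's standard `dis` module offers a glimpse one layer down. CPython compiles a function into bytecode for its own virtual machine (not directly into the processor's machine code), and `dis.dis` prints those lower-level instructions. The `average` function is a hypothetical example, and the exact instructions printed differ between Python versions.

```python
import dis


def average(values: list[float]) -> float:
    # High-level code: names and arithmetic, no registers or addresses.
    return sum(values) / len(values)


print(average([2.0, 4.0, 6.0]))  # 4.0

# Print the bytecode the CPython compiler generated for average().
dis.dis(average)

# The same instructions are also available as data.
opnames = [instr.opname for instr in dis.get_instructions(average)]
print(opnames)
```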

Finally, at the top, we have applications—the apps and websites you use every day. When you scroll through social media or edit a photo, you're interacting with the highest level of abstraction. Beneath that simple swipe or click are millions of lines of code, distributed across thousands of computers, all working together.

Why Abstraction Matters

Without abstraction, modern computing would not just be impractical—it would be impossible.

Consider your smartphone. It contains more computing power than the systems that guided Apollo 11 to the moon. It connects to networks and satellites, renders high-definition graphics, processes voice commands, and runs dozens of apps simultaneously—all in your pocket. No single person understands every detail of how all these pieces work together. And they don't need to. Each component is designed with clear abstractions that allow different teams to work independently on different layers.

This is the power of abstraction: it enables collaboration and scalability. One team can improve the battery technology while another optimizes the operating system, and a third develops new apps. They do not need to coordinate every detail because the abstractions between layers remain stable.
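
A stable boundary between teams maps directly onto interfaces in code. In the Python sketch below, a hypothetical `Battery` protocol is the agreed contract. The "operating system" function `low_power_mode` depends only on that contract, so the battery team can swap in a new design without the OS code changing. All class names and the 20 percent threshold are invented for illustration.

```python
from typing import Protocol


class Battery(Protocol):
    """The agreed interface: the only thing both teams must keep stable."""

    def charge_percent(self) -> int: ...


class BasicBattery:
    """Original design: stores a single charge reading."""

    def __init__(self, charge: int) -> None:
        self._charge = charge

    def charge_percent(self) -> int:
        return self._charge


class MultiCellBattery:
    """Hypothetical redesign: tracks several cells internally."""

    def __init__(self, cells: list[int]) -> None:
        self._cells = cells

    def charge_percent(self) -> int:
        return sum(self._cells) // len(self._cells)


def low_power_mode(battery: Battery) -> bool:
    """'Operating system' code: written against Battery, not a concrete class."""
    return battery.charge_percent() < 20


print(low_power_mode(BasicBattery(15)))                # True
print(low_power_mode(MultiCellBattery([80, 90, 70])))  # False
```

Neither battery class inherits from `Battery`. `typing.Protocol` uses structural typing, so a static type checker such as mypy accepts any class with a matching `charge_percent` method.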

Abstraction also drives innovation. When you don't have to reinvent the wheel every time, you can focus on building new things. Early programmers had to write code that directly controlled hardware. Today’s developers can build complex applications in days because they're standing on a tower of abstractions built over decades. They're not smarter than early programmers; they just have better abstractions to work with.
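
As a small illustration of standing on existing abstractions, the Python snippet below serializes a record to JSON and computes a SHA-256 fingerprint of it using only the standard library. Implementing a JSON encoder or the SHA-256 algorithm correctly from scratch would be a substantial project; here each is a single call. The record and the `fingerprint` helper are hypothetical.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    # json.dumps handles quoting, escaping, and formatting;
    # sort_keys=True makes the output independent of key order.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    # hashlib hides the entire SHA-256 algorithm behind one call.
    return hashlib.sha256(payload).hexdigest()


a = fingerprint({"user": "ada", "score": 42})
b = fingerprint({"score": 42, "user": "ada"})
print(a == b)  # True: same content, same key order after sorting
print(len(a))  # 64 hex characters
```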

The Double-Edged Nature

Abstraction does come with trade-offs. When complexity is hidden, we have to trust that the hidden parts work as they should. For example, if your car’s engine light comes on, you probably can’t fix the problem yourself the way many people could with older, simpler cars, because far more of a modern car’s complexity is hidden from the driver. In many cases, we give up understanding so we can do more.

There's also the risk of "leaky abstractions"—moments when the underlying complexity breaks through the simplified interface. If your computer freezes, you might have to learn about memory management. If a website loads slowly, you may need to understand server architecture. These moments reveal that abstractions are helpful fictions, not absolute truths.
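
A classic everyday example of a leaky abstraction is floating-point arithmetic. Python's `float` looks like an ordinary decimal number, but it is stored in binary, and values such as 0.1 have no exact binary representation. The sketch below shows that underlying detail leaking through, along with one common way to cope with it.

```python
import math

total = 0.1 + 0.2

print(total)                     # 0.30000000000000004
print(total == 0.3)              # False: the binary representation leaks through
print(math.isclose(total, 0.3))  # True: compare with a tolerance instead
```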

Yet even with these limitations, abstraction remains essential. The alternative isn't a world where everyone understands everything. Instead, it would be a world with far less capability, where systems are simple enough to understand—but too limited to do much of interest.

Building on Invisibility

We often take abstraction for granted because good abstraction is invisible. You don't notice it until it's absent. Try to imagine using a computer if you had to write in binary code, or driving a car if you had to manually adjust the fuel-air mixture for every acceleration. The absurdity of these scenarios reveals how much we depend on abstraction.

Every technological advancement builds on previous layers of abstraction. The internet works because its layered protocols let application programmers ignore the details of the telecommunications infrastructure beneath them. Smartphones exist because designers didn't need to fabricate their own processors. Artificial intelligence advances because researchers can focus on algorithms rather than the hardware running them.

Abstraction is what allows human knowledge to compound. Each generation builds on the abstractions created by the previous one, reaching higher without needing to reconstruct everything from scratch.

The Essential Idea

In the end, abstraction is not just important to computer science—it is what makes computer science possible. It's the organizing principle that lets us build complex systems, collaborate across teams and decades, and keep pushing the boundaries of what technology can do.

The next time you tap your phone or click a link, take a moment to appreciate the tower of abstractions beneath that simple gesture. From quantum physics to human interface design, from transistors to touchscreens, abstraction is what bridges the gap between incomprehensible complexity and everyday usability. It's the idea that made the modern world possible.

Abstraction isn’t just a tool for experts—it’s the invisible thread connecting every technological advance we enjoy. By appreciating its role, we gain a deeper understanding of both computer science and the world around us.